Aleksander Roszig

April 19, 2026 | 7 min Read

Aleksander Roszig

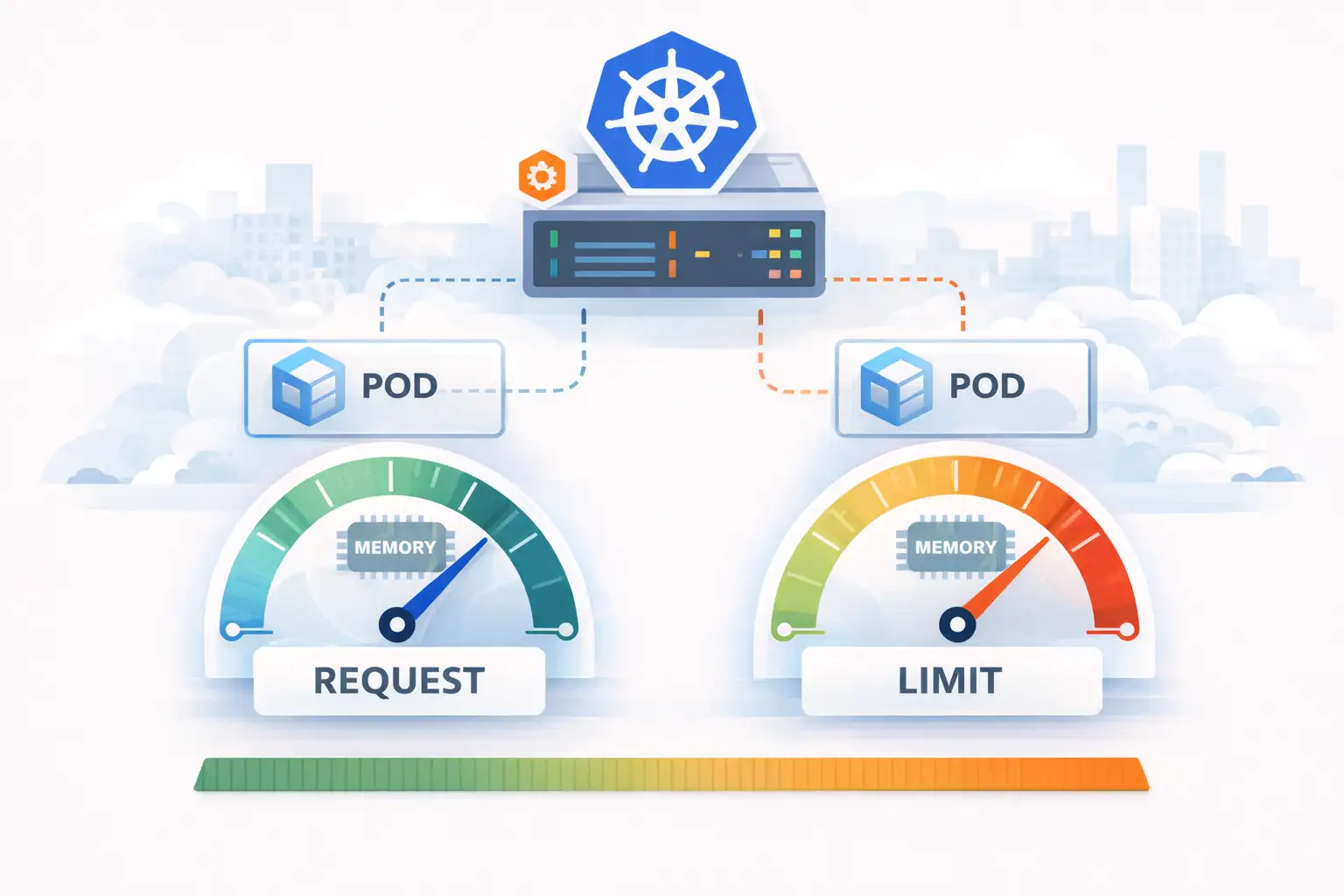

April 19, 2026 | 7 min ReadKubernetes Management: Memory Request and Limit in Practice

This is the second part of an article series about resource management in Kubernetes. In the first part, we discussed how Kubernetes manages CPU, and in this part we focus on memory.

How does Kubernetes manage memory?

From the previous part (Kubernetes CPU request and limit) we learned that Kubernetes uses cgroups for resource management, so now we can take a closer look at how this works for memory. We will focus on cgroups v2. The unified hierarchy has been stable since kernel 4.5 and is now the default in distributions such as Ubuntu (since 21.10), Red Hat Enterprise Linux 9 (opt-in on RHEL 8), and Amazon Linux 2023. It is worth noting that cgroups v2 offers memory.min, which guarantees a minimum amount of memory to a cgroup (v1 had no equivalent). However, in the default Kubernetes configuration, memory request is used for scheduling and never reaches the cgroup, regardless of cgroups version. Kubelet does not pass the request value to the kernel as memory.min, so a container's memory can still be reclaimed, and the container can still be OOM-killed, even if its usage stays within the declared request. Only the MemoryQoS feature gate (alpha since 1.22, still alpha and disabled by default in 1.36) makes kubelet map requests.memory to memory.min in cgroup v2. The feature remains alpha because of a potential kernel livelock under aggressive allocation near memory.high, and it requires kernel >= 5.9 and a supported runtime (containerd/CRI-O).

According to the memory controller documentation (https://www.kernel.org/doc/Documentation/admin-guide/cgroup-v2.rst), the controller tracks:

- Userland memory - page cache and anonymous memory.

- Kernel data structures such as dentries and inodes.

- TCP socket buffers

It also exposes interface files such as:

- memory.current - the total amount of memory currently used by the cgroup and its descendants

- memory.min - “hard protection”: memory usage within this boundary is never reclaimed (Kubernetes maps memory request here only when MemoryQoS is enabled)

- memory.low - “best-effort protection”: memory usage within this boundary is not reclaimed unless there is no reclaimable memory left in unprotected cgroups

- memory.high - a throttle limit: above it, processes in the cgroup are slowed down and put under heavy reclaim pressure, but the OOM killer is not invoked

- memory.max - the hard limit: if usage reaches it and the kernel cannot reclaim enough memory within the cgroup, it invokes the OOM killer inside that cgroup (OOM kill)

Memory Request - memory.min

By default, memory request is not written to any cgroup file. With the MemoryQoS feature gate enabled, it is mapped to memory.min in cgroups v2, which makes it the minimum amount of memory guaranteed to the cgroup: the kernel will not reclaim this memory as long as usage stays within that boundary. If we set in a Deployment:

```yaml
resources:
  requests:
    memory: 500Mi
```

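then, with the MemoryQoS feature gate enabled, kubelet writes this value as memory.min at two levels. For each container, kubelet passes it to the container runtime (containerd / CRI-O) in the Unified field of the CRI container resources, and the runtime sets memory.min in the container cgroup. For the Pod cgroup, the value is computed by the ResourceConfigForPod function and applied by kubelet itself: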

```go
func ResourceConfigForPod(allocatedPod *v1.Pod, enforceCPULimits bool, cpuPeriod uint64,
	enforceMemoryQoS bool) *ResourceConfig {
	// ...
	if enforceMemoryQoS {
		memoryMin := int64(0)
		if request, found := reqs[v1.ResourceMemory]; found {
			memoryMin = request.Value()
		}
		if memoryMin > 0 {
			result.Unified = map[string]string{
				Cgroup2MemoryMin: strconv.FormatInt(memoryMin, 10),
			}
		}
	}
	// ...
}
```

Note that request.Value() returns the value in bytes, so in our example the cgroup file will contain 524288000 (500 * 1024 * 1024) rather than the literal string “500Mi”.
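The unit suffix matters here. Binary suffixes (Ki, Mi, Gi) are powers of 1024, decimal ones (k, M, G) are powers of 1000, and a lowercase m means milli, so a memory value of 500m is half a byte, which Quantity.Value() rounds up to 1. The sketch below reproduces this arithmetic with the standard library only; quantityToBytes is a hypothetical, simplified stand-in for resource.Quantity from k8s.io/apimachinery (integer values and a subset of suffixes only), not how that package is implemented.

```go
package main

import (
	"fmt"
	"strconv"
)

// multipliers covers a subset of the suffixes Kubernetes accepts:
// binary suffixes are powers of 1024, decimal suffixes powers of 1000.
var multipliers = map[string]int64{
	"":   1,
	"Ki": 1 << 10, "Mi": 1 << 20, "Gi": 1 << 30, "Ti": 1 << 40,
	"k": 1_000, "M": 1_000_000, "G": 1_000_000_000, "T": 1_000_000_000_000,
}

// splitSuffix splits "500Mi" into "500" and "Mi".
func splitSuffix(q string) (string, string) {
	i := len(q)
	for i > 0 && (q[i-1] < '0' || q[i-1] > '9') {
		i--
	}
	return q[:i], q[i:]
}

// quantityToBytes is a hypothetical, simplified converter for non-negative
// integer quantities (no overflow checks). The suffix "m" means milli, and
// the result is rounded up to a whole byte.
func quantityToBytes(q string) (int64, error) {
	num, suffix := splitSuffix(q)
	n, err := strconv.ParseInt(num, 10, 64)
	if err != nil {
		return 0, err
	}
	if n < 0 {
		return 0, fmt.Errorf("negative quantity %q", q)
	}
	if suffix == "m" {
		return (n + 999) / 1000, nil // ceil(n / 1000)
	}
	mult, ok := multipliers[suffix]
	if !ok {
		return 0, fmt.Errorf("unsupported suffix %q", suffix)
	}
	return n * mult, nil
}

func main() {
	for _, q := range []string{"500Mi", "500M", "500m"} {
		b, err := quantityToBytes(q)
		if err != nil {
			fmt.Println(q, "error:", err)
			continue
		}
		fmt.Printf("%s = %d bytes\n", q, b)
	}
}
```

Running it gives 524288000 bytes for 500Mi, 500000000 for 500M, and 1 for 500m, which is why the case of the suffix is worth double-checking in manifests.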

The enforceMemoryQoS parameter in kubelet code is true only when the MemoryQoS feature gate is enabled and the node uses cgroups v2; the feature gate is disabled by default. This means that in the standard Kubernetes configuration:

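- memory request is not written to the cgroup as memory.min, so the kernel gives that memory no protection from reclaim

- memory request is used by kube-scheduler for Pod placement decisions; kubelet uses it only indirectly, to compute oom_score_adj for Burstable containers and to rank Pods for eviction under node memory pressure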
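The effect of the feature gate on memory.min can be modelled in isolation. The sketch below follows the enforceMemoryQoS branch of ResourceConfigForPod, with hypothetical simplifications: unifiedForPod is our own function, the Pod request is the plain sum of container requests in bytes (the real code uses the aggregated Pod request, which also accounts for init containers and Pod overhead), and the map key is the literal file name memory.min instead of the Cgroup2MemoryMin constant.

```go
package main

import (
	"fmt"
	"strconv"
)

// unifiedForPod returns the cgroup v2 settings this sketch would write for a
// Pod: memory.min only when MemoryQoS is enforced and the summed request > 0.
// containerRequests holds per-container memory requests in bytes (0 = none).
func unifiedForPod(enforceMemoryQoS bool, containerRequests []int64) map[string]string {
	if !enforceMemoryQoS {
		return nil // default configuration: the request never reaches the cgroup
	}
	var memoryMin int64
	for _, r := range containerRequests {
		memoryMin += r
	}
	if memoryMin <= 0 {
		return nil
	}
	return map[string]string{"memory.min": strconv.FormatInt(memoryMin, 10)}
}

func main() {
	requests := []int64{500 << 20, 0} // one container requests 500Mi, one requests nothing
	fmt.Println("MemoryQoS off:", unifiedForPod(false, requests))
	fmt.Println("MemoryQoS on: ", unifiedForPod(true, requests))
}
```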

When MemoryQoS is enabled and kubelet sets memory.min on the Pod cgroup, the kernel treats this value as a hard guarantee: as long as cgroup memory usage stays within the effective min boundary, its pages are not reclaimed under any condition, even during node memory pressure. If the kernel cannot keep this guarantee because no unprotected reclaimable memory is left, it invokes the OOM killer, which may terminate processes outside the protected cgroup to free the required memory. This is a fundamental difference from the default setup, where the pages of a Pod with a 500Mi request are reclaimed under node memory pressure like those of any other Pod.

Memory Limit - memory.max

Memory limit in Kubernetes maps to memory.max in cgroups v2 (the equivalent of memory.limit_in_bytes in cgroups v1). It is a hard cap on the memory a cgroup can use: if its processes try to allocate more and the kernel cannot reclaim enough memory within the cgroup, it invokes the OOM killer, which kills process(es) in that cgroup.

If we set in a Deployment:

```yaml
resources:
  requests:
    memory: 250Mi
  limits:
    memory: 500Mi
```

then kubelet in ResourceConfigForPod gets the limit in bytes and writes it to ResourceConfig:

```go
if limit, found := limits[v1.ResourceMemory]; found {
	memoryLimits = limit.Value()
}
// ...
result.Memory = &memoryLimits
```

For each container, the limit is passed to the container runtime (containerd / CRI-O) via CRI, and the runtime sets it as memory.max in the container cgroup. The ResourceConfig built by ResourceConfigForPod is applied by kubelet to the Pod cgroup, whose memory.max holds the aggregated limit of all containers. Again, limit.Value() returns bytes, so 500Mi is written to the cgroup as 524288000.

Key detail: the limit is set conditionally - depending on QoS class

Here is the most interesting part of ResourceConfigForPod. Unlike memory request (which, when enforceMemoryQoS is enabled, is always set when present), the memory limit on the Pod cgroup is set only when Kubernetes has a meaningful value to write, and that depends on the Pod QoS class:

```go
func ResourceConfigForPod(allocatedPod *v1.Pod, enforceCPULimits bool, cpuPeriod uint64, enforceMemoryQoS bool) *ResourceConfig {
	// ...

	// determine the qos class
	qosClass := v1qos.GetPodQOS(allocatedPod)

	// build the result
	result := &ResourceConfig{}
	if qosClass == v1.PodQOSGuaranteed {
		result.CPUShares = &cpuShares
		result.CPUQuota = &cpuQuota
		result.CPUPeriod = &cpuPeriod
		result.Memory = &memoryLimits
	} else if qosClass == v1.PodQOSBurstable {
		result.CPUShares = &cpuShares
		if cpuLimitsDeclared {
			result.CPUQuota = &cpuQuota
			result.CPUPeriod = &cpuPeriod
		}
		if memoryLimitsDeclared {
			result.Memory = &memoryLimits
		}
	} else {
		shares := uint64(MinShares)
		result.CPUShares = &shares
	}
	result.HugePageLimit = hugePageLimits

	if enforceMemoryQoS {
		memoryMin := int64(0)
		if request, found := reqs[v1.ResourceMemory]; found {
			memoryMin = request.Value()
		}
		if memoryMin > 0 {
			result.Unified = map[string]string{
				Cgroup2MemoryMin: strconv.FormatInt(memoryMin, 10),
			}
		}
	}
	// ...
}
```

Guaranteed - all containers in the Pod have declared requests == limits for CPU and memory. Kubelet unconditionally sets result.Memory = &memoryLimits, so the Pod cgroup gets a concrete memory.max.

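Burstable - at least one container has some request or limit, but the conditions for Guaranteed are not met. Here memoryLimitsDeclared becomes important. It is set to true at the beginning of the function, but changed to false by ContainerFn when any container has no declared memory limit (res.Memory().IsZero()). As a result, a Burstable Pod gets a Pod-level memory.max only if every container declares a memory limit; otherwise the Pod cgroup has no memory.max of its own, although containers that do declare a limit still get one on their own cgroup.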

BestEffort - no container has requests or limits. The code goes into the else branch and sets only the minimal CPUShares (MinShares, which is 2 - the lowest value allowed for cpu.shares in cgroups v1, which converts to cpu.weight 1 in cgroups v2). result.Memory is not set at all, so the Pod cgroup has no memory.max of its own. The Pod can use memory as long as it is available: it is constrained only by its parent cgroups (kubepods-besteffort.slice and, above it, kubepods.slice) and by being first in eviction order when the node is under pressure.
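The three branches can be condensed into a small model that runs on its own. In the sketch below, container, podQOS and podMemoryMax are hypothetical names; requests and limits are plain byte and millicore counts with 0 meaning not declared, requests are assumed to be already defaulted to limits, and init containers, Pod overhead and hugepages are ignored. It illustrates the decision logic described above rather than reproducing v1qos.GetPodQOS or ResourceConfigForPod.

```go
package main

import "fmt"

// container is a hypothetical, simplified spec: memory in bytes,
// CPU in millicores, 0 means "not declared".
type container struct {
	cpuRequest, cpuLimit int64
	memRequest, memLimit int64
}

type qosClass string

const (
	guaranteed qosClass = "Guaranteed"
	burstable  qosClass = "Burstable"
	bestEffort qosClass = "BestEffort"
)

// podQOS is a simplified QoS classification.
func podQOS(cs []container) qosClass {
	anySet, allGuaranteed := false, true
	for _, c := range cs {
		if c.cpuRequest != 0 || c.cpuLimit != 0 || c.memRequest != 0 || c.memLimit != 0 {
			anySet = true
		}
		if c.cpuLimit == 0 || c.memLimit == 0 || c.cpuRequest != c.cpuLimit || c.memRequest != c.memLimit {
			allGuaranteed = false
		}
	}
	switch {
	case !anySet:
		return bestEffort
	case allGuaranteed:
		return guaranteed
	default:
		return burstable
	}
}

// podMemoryMax returns the Pod-level memory.max in bytes and whether it is
// set, following the branch structure described for ResourceConfigForPod.
func podMemoryMax(cs []container) (int64, bool) {
	var sum int64
	memoryLimitsDeclared := true
	for _, c := range cs {
		if c.memLimit == 0 {
			memoryLimitsDeclared = false // same idea as ContainerFn checking res.Memory().IsZero()
		}
		sum += c.memLimit
	}
	switch podQOS(cs) {
	case guaranteed:
		return sum, true
	case burstable:
		if memoryLimitsDeclared {
			return sum, true
		}
	}
	return 0, false // BestEffort, or Burstable with a missing memory limit
}

func main() {
	const mi = int64(1) << 20
	pods := []struct {
		name       string
		containers []container
	}{
		{"guaranteed", []container{{cpuRequest: 500, cpuLimit: 500, memRequest: 500 * mi, memLimit: 500 * mi}}},
		{"burstable, all limits", []container{{memRequest: 250 * mi, memLimit: 500 * mi}}},
		{"burstable, one limit missing", []container{{memRequest: 250 * mi, memLimit: 500 * mi}, {memRequest: 100 * mi}}},
		{"best effort", []container{{}}},
	}
	for _, p := range pods {
		limit, ok := podMemoryMax(p.containers)
		fmt.Printf("%-30s %-10s memory.max set=%v (%d bytes)\n", p.name, podQOS(p.containers), ok, limit)
	}
}
```

The third Pod shows the trap from the Burstable case: a single container without a memory limit is enough to leave the Pod cgroup without memory.max, even though the other container declares 500Mi.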

Summary

Memory management in Kubernetes is more complex than CPU management, mainly because of cgroups v1 vs v2 implementation differences and because MemoryQoS - the feature that allows kernel-level enforcement of memory request - is still alpha and requires deliberate enablement.

It is worth remembering several practical conclusions from analyzing ResourceConfigForPod:

- Memory request without MemoryQoS never reaches the cgroup: it drives scheduling (and, indirectly, kubelet's oom_score_adj and eviction ranking), but the kernel gives the requested memory no protection from reclaim. Only enabling the MemoryQoS feature gate makes the request reach the cgroup as memory.min and become a real guarantee.

- Memory limit behaves differently depending on QoS class - Guaranteed always gets memory.max at Pod cgroup level, Burstable only when all containers have declared limits, and BestEffort never gets its own limit and is constrained only by the parent cgroup kubepods-besteffort.slice.

- QoS class is derived from resources configuration, not set directly - one missing limits.memory in one container can move the whole Pod from Guaranteed to Burstable and change its behavior under memory pressure and eviction priority.

- In cgroups v2 with Kubernetes 1.28+, the OOM killer kills all processes in the container cgroup at once (memory.oom.group=1) instead of a single process with the highest oom_score - an important behavioral change for containers that run multiple processes (for example worker pools or PostgreSQL).

Practical consequence: if you set only requests without limits expecting a memory “reservation”, it does not work the way it may seem. Without MemoryQoS, the kernel neither reserves nor protects the requested memory, so a neighboring Pod in the same QoS class can consume that memory before your workload allocates it.
